GSOC 2017 - Week 4 of GSoC 17

Published:

This post covers the third week of Google Summer of Code (i.e., June 24 to July 1). The week was spent cross-testing the API and analysing its behaviour on some challenging tests.

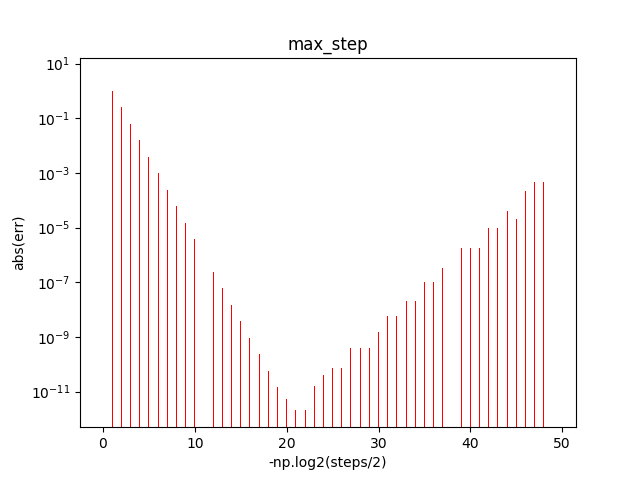

Plot of error vs. steps:

For ‘max_steps’:

base_step = 2.0

step_ratio = 2

num_steps = 50

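The plot is as shown below. Initially the steps are large, so the error is substantial because of the formula (truncation) error of the difference approximation. As the steps get smaller, the error decreases. After a certain number of steps, the error starts increasing again because of subtraction (cancellation) error: the function values being subtracted become nearly equal, and round-off dominates the result.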

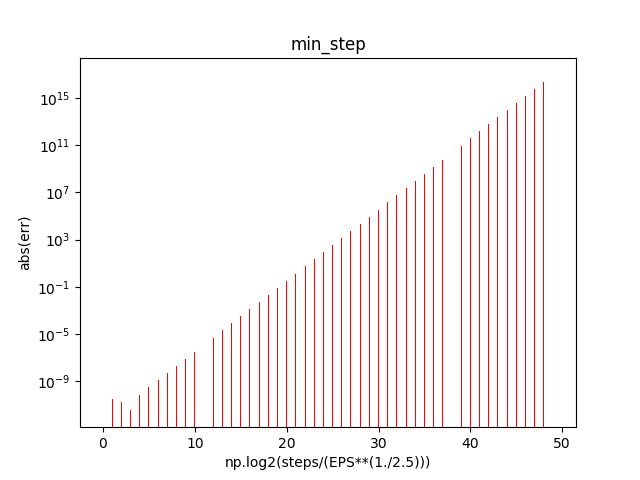
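The shape of this curve can be reproduced with a few lines of plain Python. The sketch below is only an illustration, not the scipy.diff implementation: it assumes a central difference formula, a step sequence that shrinks as `base_step / step_ratio**i`, and `sin` at `x = 1` as a test function. The helper names `central_difference` and `max_step_sequence` are made up for this example.

```python
import math


def central_difference(f, x, h):
    """Second-order central difference estimate of f'(x)."""
    return (f(x + h) - f(x - h)) / (2.0 * h)


def max_step_sequence(base_step=2.0, step_ratio=2.0, num_steps=50):
    """Assumed 'max_steps' sequence: start at base_step and keep shrinking."""
    return [base_step / step_ratio ** i for i in range(num_steps)]


x0 = 1.0
exact = math.cos(x0)  # derivative of sin at x0
steps = max_step_sequence()
errors = [abs(central_difference(math.sin, x0, h) - exact) for h in steps]

# Index of the step with the smallest error: it sits between the
# truncation-dominated start and the round-off-dominated end.
best = min(range(len(errors)), key=errors.__getitem__)
print(f"largest step  {steps[0]:.2e} -> error {errors[0]:.2e}")
print(f"best step     {steps[best]:.2e} -> error {errors[best]:.2e}")
print(f"smallest step {steps[-1]:.2e} -> error {errors[-1]:.2e}")
```

The best step lands somewhere in the middle of the sequence, which is the dip visible in the plot.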

For ‘min_steps’:

base_step = (epsilon)^(1./2.5)

step_ratio = 2

num_steps = 50

The plot is as shown below.

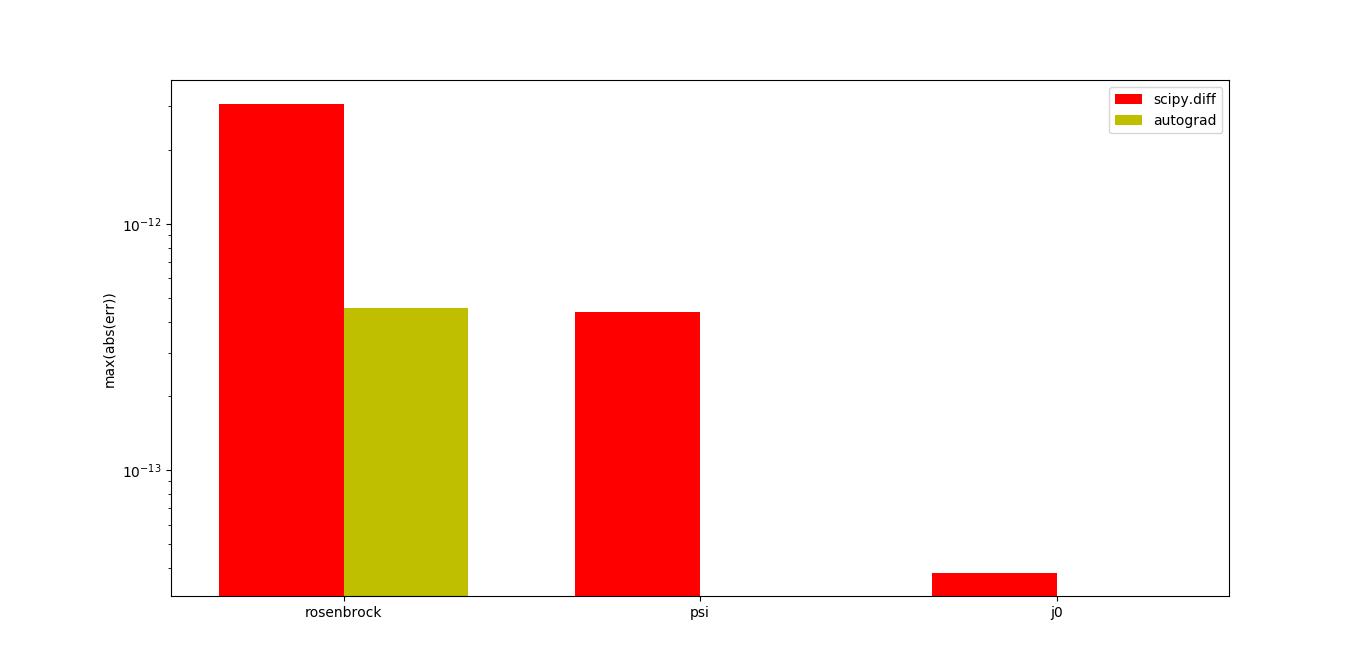
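For comparison, here is a rough sketch of the ‘min_steps’ setting. It assumes that epsilon means the double-precision machine epsilon and that the sequence grows from `base_step` as `base_step * step_ratio**i`; neither assumption is confirmed above, and `min_step_sequence` is a hypothetical helper, not part of scipy.diff.

```python
import math
import sys


def central_difference(f, x, h):
    """Second-order central difference estimate of f'(x)."""
    return (f(x + h) - f(x - h)) / (2.0 * h)


def min_step_sequence(step_ratio=2.0, num_steps=50):
    """Assumed 'min_steps' sequence: start near the round-off limit and grow."""
    base_step = sys.float_info.epsilon ** (1.0 / 2.5)
    return [base_step * step_ratio ** i for i in range(num_steps)]


x0 = 1.0
exact = math.cos(x0)  # derivative of sin at x0
steps = min_step_sequence()
errors = [abs(central_difference(math.sin, x0, h) - exact) for h in steps]

print(f"base_step    = {steps[0]:.3e} -> error {errors[0]:.2e}")
print(f"largest step = {steps[-1]:.3e} -> error {errors[-1]:.2e}")
```

Under these assumptions the first steps are already small enough to give a good estimate, while the last ones are so large that the difference quotient no longer resembles the derivative at all.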

## Comparison with autograd

Autograd is a Python library that computes derivatives using automatic differentiation. To cross-test and analyse scipy.diff, functions from scipy.special and scipy.optimize were used. The accuracy results are as follows:

scipy.diff is less accurate than autograd because the two use different techniques to compute derivatives: autograd applies automatic differentiation, whereas scipy.diff approximates the derivative numerically from function values at a sequence of steps. However, scipy.diff is more versatile and can work on more complex functions.
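To see why the technique matters, the sketch below contrasts a toy forward-mode automatic differentiation with a central difference on `f(x) = x * sin(x)`. It is not how autograd is implemented (autograd records operations and differentiates in reverse mode), and the `Dual` class, `dual_sin` and the step `h = 1e-5` are assumptions chosen purely for illustration.

```python
import math


class Dual:
    """Minimal dual number: carries a value and its derivative together."""

    def __init__(self, value, deriv=0.0):
        self.value = value
        self.deriv = deriv

    def __mul__(self, other):
        other = other if isinstance(other, Dual) else Dual(other)
        # Product rule applied exactly, with no step size involved.
        return Dual(self.value * other.value,
                    self.deriv * other.value + self.value * other.deriv)

    __rmul__ = __mul__


def dual_sin(d):
    """sin for dual numbers, using the chain rule."""
    return Dual(math.sin(d.value), math.cos(d.value) * d.deriv)


x0 = 1.0
exact = math.sin(x0) + x0 * math.cos(x0)  # analytic derivative of x*sin(x)

# Automatic differentiation: seed the input derivative with 1.0.
x = Dual(x0, 1.0)
ad_error = abs((x * dual_sin(x)).deriv - exact)

# Numerical differentiation: central difference with a fixed step.
h = 1e-5
f = lambda t: t * math.sin(t)
fd_error = abs((f(x0 + h) - f(x0 - h)) / (2.0 * h) - exact)

print(f"automatic differentiation error: {ad_error:.2e}")
print(f"central difference error:        {fd_error:.2e}")
```

The automatic differentiation result is limited only by round-off in the individual operations, while the finite difference carries both truncation and cancellation error. The trade-off is that the dual-number approach needs every operation in the function to be overloaded, whereas a finite difference only needs to evaluate the function.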